Fine Tuning LRASPP MobileNetV3 on the IDD Segmentation Dataset and Exporting to ONNX

In this article, we fine-tune the LRASPP MobileNetV3 model on the entire Indian Driving Dataset (IDD) for semantic segmentation. ...

In this article, we are training the LRASPP MobileNetV3 model on a subset of the Indian Driving Dataset for semantic segmentation. ...

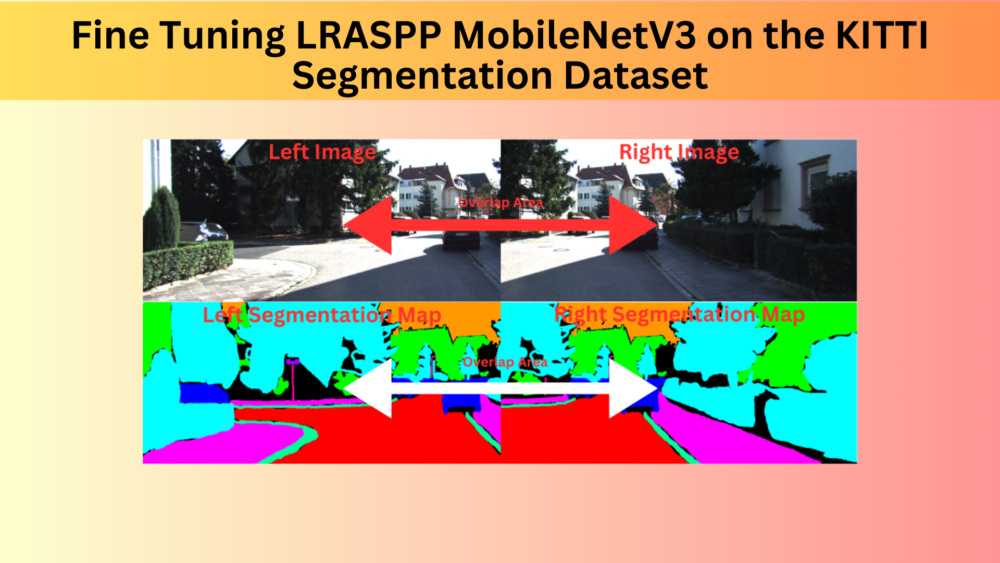

In this article, we fine-tune the LRASPP MobileNetV3 segmentation model on the KITTI dataset using two different approaches and compare the results. ...

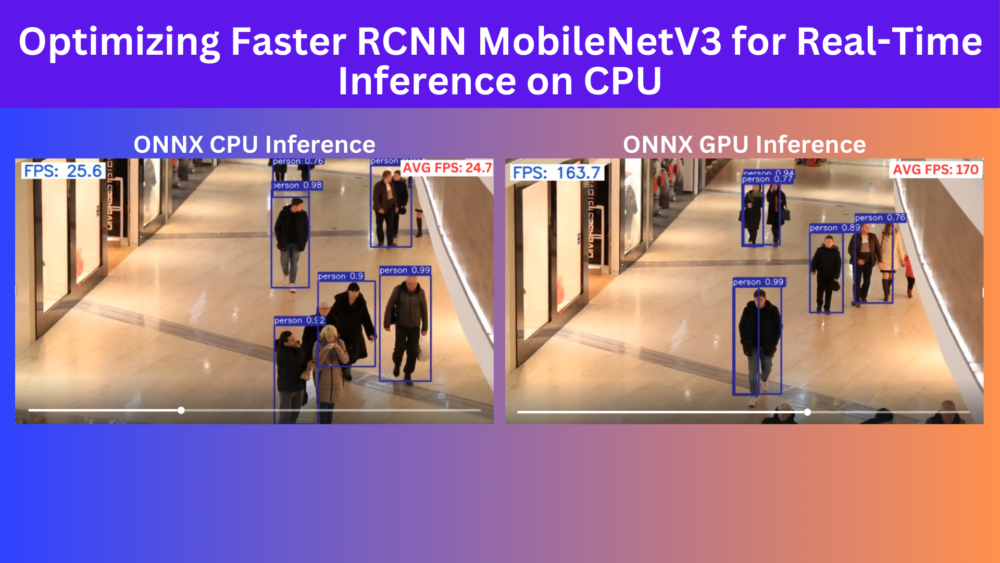

In this article, we optimize the Faster RCNN MobileNetV3 model using ONNX for near real-time detection on CPU. ...

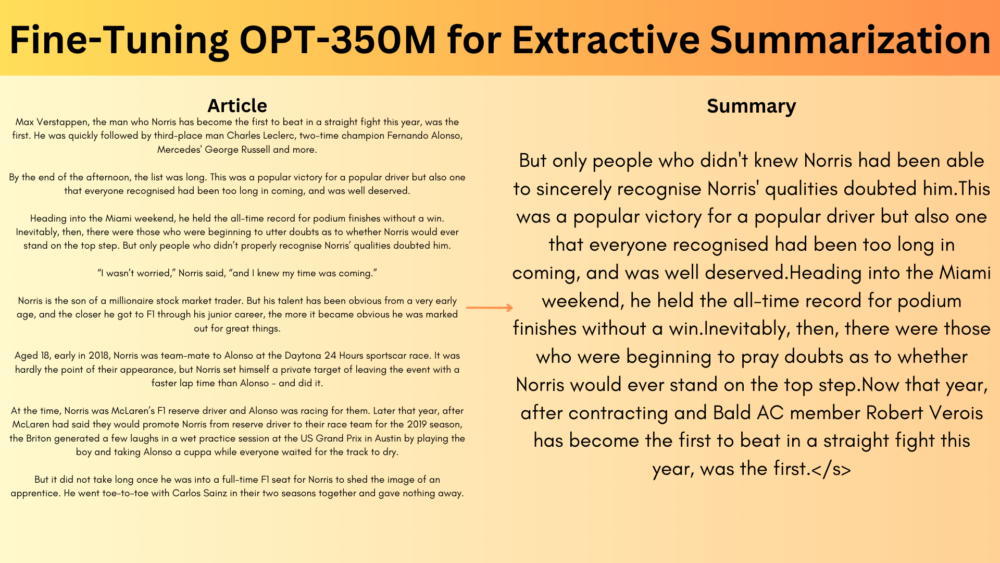

In this article, we fine-tune the OPT-350M model for extractive summarization on the BBC News Summary dataset using the Hugging Face Transformers library. ...
