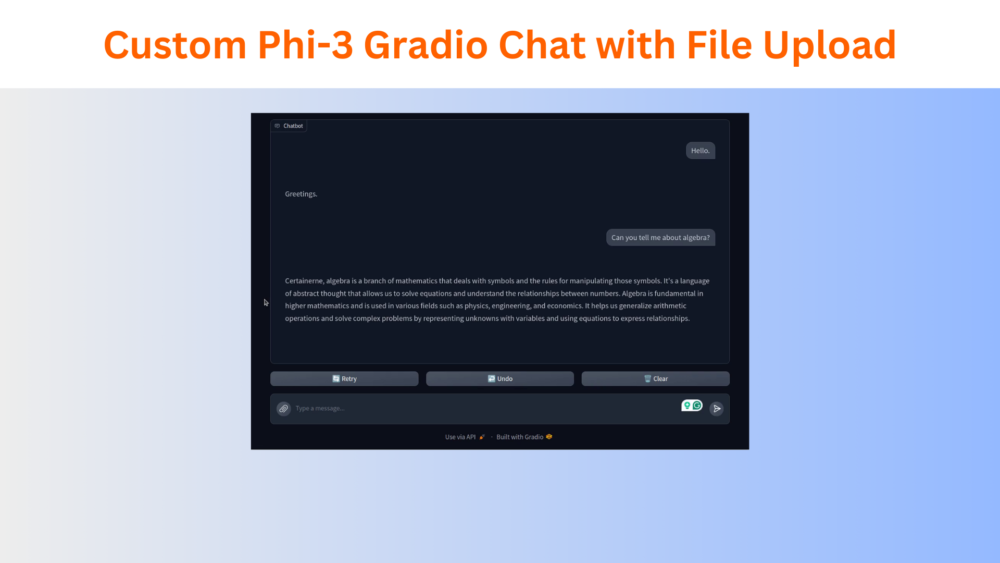

Custom Phi-3 Gradio Chat with File Upload

In this article, we create a custom Phi-3 Gradio chat interface with the ability to upload and query files. ...

In this article, we discuss Phi 1.5, a 1.3-billion-parameter language model from Microsoft. ...

In this article, we create an instruction-following Jupyter Notebook interface to prompt a fine-tuned Phi 1.5 model. ...

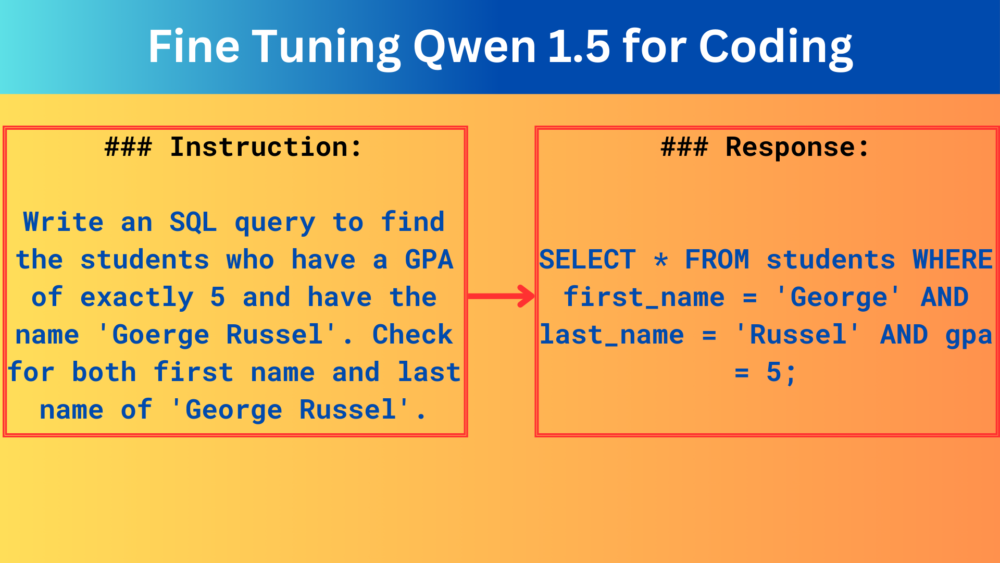

In this article, we fine-tune the Qwen 1.5 0.5B model on the CodeAlpaca dataset for coding, using the Hugging Face Transformers SFT Trainer pipeline. ...

In this article, we fine-tune the Phi 1.5 model using QLoRA on the Stanford Alpaca dataset with Hugging Face Transformers. ...
